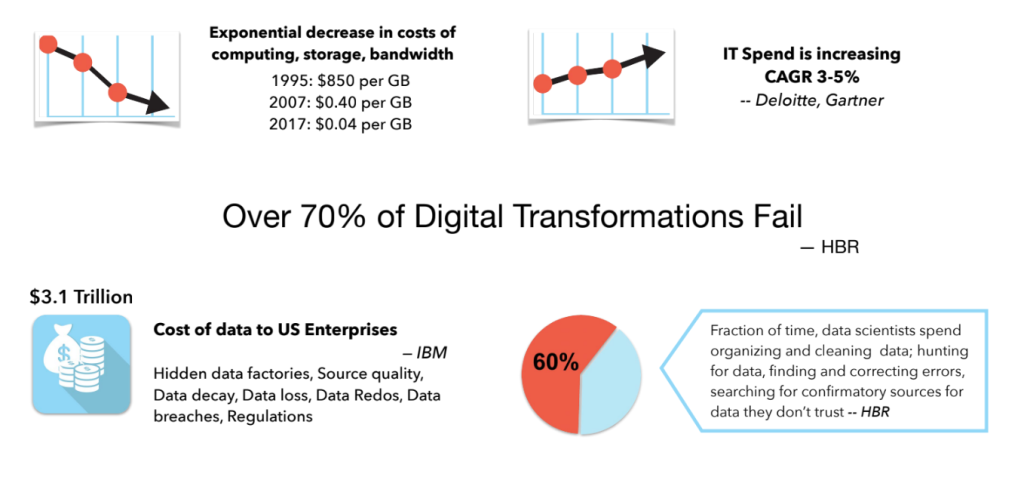

Digital technologies can create tremendous opportunities for growth, increase efficiencies and margins, provide disintermediated access, and improve customer experience at scale. Unfortunately, for many, the promises of digital transformation have not translated to reality. Despite technology platforms, automation, low-code tools, and AI assistants, costs continue to rise, lead times remain long, and investments show no significant return.

Computing, storage, and bandwidth costs have declined exponentially over the years. Programming languages that virtualize hardware have significantly brought down software development and porting costs. The move to the cloud and serverless computing has shortened time-to-market and reduced deployment and operational costs. However, despite declining hardware costs and improvements in programming technologies and platforms, the cost of digital transformation is increasing year over year, and transformation initiatives continue to fail at an alarming rate.

Digital transformation success requires addressing data poverty, data quality, and data fragmentation

A significant factor in the increase in IT services and software development costs is managing and mastering data. While we have been able to virtualize computing, storage, and bandwidth, data is still not virtualized. The volume, variety, and velocity of data still determine the tools and platforms of choice — different platforms, skills, and personnel are required for managing small and big data, structured and unstructured data, streaming and non-streaming data, business and location data, and ML and AI data.

As anyone who has worked with data knows, even with all kinds of tools to manage the volume, variety, and velocity of data, most of the time is spent on establishing the veracity of data sources and confirming data quality. An IBM report estimated the cost of poor-quality data to US enterprises at $3.1 trillion annually. It is the small things – names that are misspelled, incomplete addresses, telephone numbers with and without area codes, incorrect tagging, incorrect linking, images that have to be resized or even redone, location coordinates that have to be normalized to lat/longs, identifying duplicates, disambiguating identities, correcting data bias. In addition, the farther downstream from its source the data is processed, the higher the cost of correcting and cleansing it.
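To make the "small things" concrete, here is a minimal Python sketch of two of them: normalizing telephone numbers that arrive with and without area codes, and flagging likely duplicates. The record fields, the default country code `1`, and the default area code `555` are assumptions chosen for illustration, not part of any system described here.

```python
import re
from collections import defaultdict

def normalize_name(name: str) -> str:
    """Lower-case, strip punctuation, and collapse repeated whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", name.lower())
    return " ".join(cleaned.split())

def normalize_phone(raw: str, country_code: str = "1", area_code: str = "555") -> str:
    """Keep digits only, adding assumed default codes when they are missing."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 7:       # local number without an area code
        digits = area_code + digits
    if len(digits) == 10:      # national number without a country code
        digits = country_code + digits
    return "+" + digits

def find_duplicates(records):
    """Group records that share the same normalized (name, phone) key."""
    groups = defaultdict(list)
    for rec in records:
        key = (normalize_name(rec["name"]), normalize_phone(rec["phone"]))
        groups[key].append(rec)
    return [group for group in groups.values() if len(group) > 1]

# Hypothetical sample data: the first two rows describe the same place.
records = [
    {"name": "City  Clinic", "phone": "(555) 010-2000"},
    {"name": "city clinic.", "phone": "010-2000"},
    {"name": "Lakeside Pharmacy", "phone": "+1 555 010 3000"},
]
duplicates = find_duplicates(records)
```

Exact-match keys like this only catch duplicates that differ in formatting. Misspelled names or partial addresses would need fuzzy matching, which is one reason cleansing gets more expensive the later it happens.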

Why do digital transformations fail at such an alarming rate? Failure is a function of adoption. A bad user experience affects adoption (Read how to create user experiences that will guarantee adoption – Expect More, Accept Less). Bad data also affects adoption. If a customer or citizen cannot access the right information when and where they need it, they will not adopt the service.

To be successful with digital transformation and automation, three questions around data must be answered in the affirmative.

- Do you have the data?

In our digital world, data is everywhere. However, there is a clear data divide between those who have and can leverage this data and those who cannot. Data poverty is when you don’t have data or cannot digitally leverage the data you have.

- Is the data good?

Good data is correct, consistent, complete, and current. Currency of data requires a continuous process for data collection. A lack of good-quality data results in faulty insights and conclusions, and wasted resources.

- Is the data accessible?

Data fragmentation occurs when data is closed and scattered across organizations, business units, tools, systems, and databases. Fragmented data has to be extracted and transformed before it can be used, which adds significantly to costs.

To succeed with digital transformation and leverage data into insights and customer-centric applications, we have to first address data poverty, data quality, and data fragmentation.

Data Poverty

Data is everywhere. 2.5 quintillion (25 followed by 17 zeros) bytes of data are created every day. When this data is organized, transformed, visualized, and activated, it can create endless opportunities, social equity, and a sustainable world. However, this data is not equitably distributed. There are data hotspots and there are regions of extreme data drought. This has created a digital divide between the data haves and the have-nots, and this gap is widening daily.

Most countries of the Global South are data-poor. Data poverty has both a socio-economic and environmental cost. It widens existing inequalities, limits access to opportunities, and can lead to increased environmental degradation.

In 2014, Myanmar’s census, the first in three decades, revealed that the country had 9 million fewer people than estimated. The previous census had been conducted in 1983, and the government had projected the population forward at a 2% growth rate derived from the 1973 and 1983 census numbers, arriving at an estimate of a little over 60 million. The 2014 census established the population as 51 million. For years, government policies and social investments were based on estimates that were off by almost 20%.
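The arithmetic behind that gap can be checked with a few lines of Python. This is a back-of-the-envelope sketch using only the figures quoted above. It assumes the 60 million estimate came from compounding 2% annually from the 1983 census and rounds "a little over 60 million" down to 60 million.

```python
estimated = 60_000_000    # government estimate, rounded down (assumption)
counted = 51_000_000      # 2014 census result
years = 2014 - 1983       # length of the projection window
assumed_growth = 0.02     # growth rate used for the projection

# How far the estimate overshot the census count, relative to the count.
overestimate = (estimated - counted) / counted

# Annual growth rate that would have landed on the census count instead,
# given the same (unstated) 1983 baseline and simple annual compounding.
implied_growth = (1 + assumed_growth) * (counted / estimated) ** (1 / years) - 1

print(f"overestimate relative to census: {overestimate:.1%}")
print(f"implied actual annual growth:    {implied_growth:.2%}")
```

Under these assumptions the overshoot is roughly 18%, and the implied actual growth rate is about 1.5% a year. A difference of around half a percentage point in the assumed rate, compounded over three decades, is enough to account for the missing 9 million people.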

This is the reality of most countries of the Global South. Censuses are expensive, and policies often rely on extrapolating data that is decades old. Randomized surveys are often used to fill in data gaps. These surveys keep sample sizes small to manage costs, so they tend to be statistically inaccurate at the last mile. Many National Statistics Offices face funding challenges in upgrading IT systems, capacity building, and data up-skilling.

How do we address data poverty? The facility-based data model provides low-cost and last-mile accurate data that governments can use to establish ground truth, helping them better prepare for and manage future disasters, epidemics, and other climate change events. Read more on how countries can address this data divide and measure progress and impact using a facility-based data model – One Earth, One Future – Going Beyond GDP.

Data Quality

Data is everywhere. However, not all data is created equal. The value of data is related to the quality of the data. Data also suffers from temporal depreciation – it starts losing value as soon as you collect it. Poor-quality data leads to inaccurate analytics, false insights, AI hallucinations, bad decisions, poor customer experiences, and worse business and developmental outcomes. Good data is complete, consistent, correct, and current.

- Complete – Completeness is about the data being comprehensive. Are all points of interest recorded and accounted for? For each record, are all the required attributes filled?

- Consistent – Consistency is about assuring that different data collectors and data sources represent data in the same way and the same formats. If an image is taken, are all images taken consistently in landscape mode? If a telephone number is required, is all data recorded with the country and area code included? Are there duplicate listings? Are all addresses entered consistently?

- Correct – Correctness is about the accuracy of the information. Does the latitude/longitude accurately locate the facility on a map?

- Current – Currency of data has to do with timeliness. Does the recorded data reflect current ground truth? Currency requires certain data to be collected periodically.
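The four checks above can be sketched as simple validation rules for a single facility record. This is a minimal illustration, not part of any framework named in this article. The `FacilityRecord` fields, the phone format, and the one-year refresh interval are all assumptions.

```python
import re
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

@dataclass
class FacilityRecord:          # hypothetical record layout
    name: Optional[str]
    phone: Optional[str]
    latitude: Optional[float]
    longitude: Optional[float]
    last_verified: Optional[date]

# Assumed convention: "+" followed by country code, area code, and number.
PHONE_PATTERN = re.compile(r"^\+\d{8,15}$")
MAX_AGE = timedelta(days=365)  # assumed refresh interval

def quality_issues(rec: FacilityRecord, today: date) -> List[str]:
    issues = []
    # Complete: every required attribute is filled.
    missing = [name for name, value in vars(rec).items() if value in (None, "")]
    if missing:
        issues.append("incomplete: missing " + ", ".join(missing))
    # Consistent: phone numbers follow one agreed format.
    if rec.phone and not PHONE_PATTERN.match(rec.phone):
        issues.append("inconsistent: phone lacks country/area code format")
    # Correct: coordinates must at least be valid positions on Earth.
    if rec.latitude is not None and not -90 <= rec.latitude <= 90:
        issues.append("incorrect: latitude out of range")
    if rec.longitude is not None and not -180 <= rec.longitude <= 180:
        issues.append("incorrect: longitude out of range")
    # Current: the record was verified within the refresh interval.
    if rec.last_verified and today - rec.last_verified > MAX_AGE:
        issues.append("stale: last verified more than a year ago")
    return issues

bad = FacilityRecord("City Clinic", "010-2000", 95.0, 77.6, date(2020, 1, 1))
good = FacilityRecord("City Clinic", "+915550102000", 12.97, 77.59, date(2023, 9, 1))
bad_issues = quality_issues(bad, today=date(2024, 1, 1))
good_issues = quality_issues(good, today=date(2024, 1, 1))
```

A range check only rules out impossible coordinates. Confirming that a latitude/longitude actually locates the facility on a map still requires verification against ground truth.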

AI models are only as good as the data they are trained on. Nations looking to leverage ML and AI solutions need clean, reliable, and high-quality data. An AI model’s behavior depends less on its architecture, the number of parameters, or the token count than on the quality of the dataset it is trained on.

How do we address data quality issues? By ensuring that the data is complete, consistent, correct, and current. Read more on how nations can address last-mile data quality issues using a continuous data collection process and the Metraa Framework – Achieving SDGs – An Actionable Framework.

Data Fragmentation

Data is everywhere. However, it is not equally accessible. Most data is closed and held captive in applications within enterprises, across business functions within an organization, or across organizations. Data fragmentation occurs when data is available, but closed and inaccessible.

Fragmented data rarely provides the big picture and at best can only deliver fragmented analytics and possibly misleading insights. The industry response to unlock the value of fragmented data is to de-silo the data, transform and normalize the data into one giant data warehouse, and then run analytics on the data warehouse. This just creates another silo with the context and content owners far removed from the analysis.

One of the reasons for the high costs of the healthcare industry today is fragmented data and incompatible IT systems across healthcare providers and insurers. Patient records are siloed and unavailable across providers and payers. In many countries of the Global South, it is not unusual for senior citizens to carry binders of past diagnostic tests, prescriptions, and procedures to physician visits. Physicians rarely have time to look through binders; new tests are ordered, new drugs are prescribed, and the binder gets thicker. The lack of an integrated healthcare system where patient records can be accessed seamlessly across providers and payers inflates waste and costs, leading to medical misdiagnoses, repeat testing, inappropriate medications, and polypharmacy.

Fragmented data significantly increases costs. Read how fragmented data is one of the reasons for the high cost of healthcare – The Missing Middle – Towards Universal and Affordable Health Coverage.

Data cannot be an afterthought. Success with data requires well-defined data models that ascribe semantics to the data, a continuous process of collecting and updating that ensures currency of data, and a well-defined open data exchange protocol that allows data to flow seamlessly across business units within an organization and across organizations.

When data is open, and services and solutions are integrated around a common data platform, businesses and governments will be able to manage their resources efficiently, deliver services faster, and provide transparency into policies and actions, allowing for bottom-up citizen and private-enterprise driven initiatives that create new opportunities and improve the ease of living for customers and citizens.

Digital Public Infrastructure (DPI) is an open source and open data architecture that provides a population-scale platform to address data poverty, data quality, and data fragmentation.

Digital Public Infrastructure

Digital Public Infrastructure (DPI) is a transformational approach to open source and open data that delivers progress at population scale for nations, provides transparency into actions, makes governments responsive, and improves the ease of living of its citizens. It allows for data to be accessed seamlessly across organizations and connects people with people, with businesses, and with local, regional, and national governments.

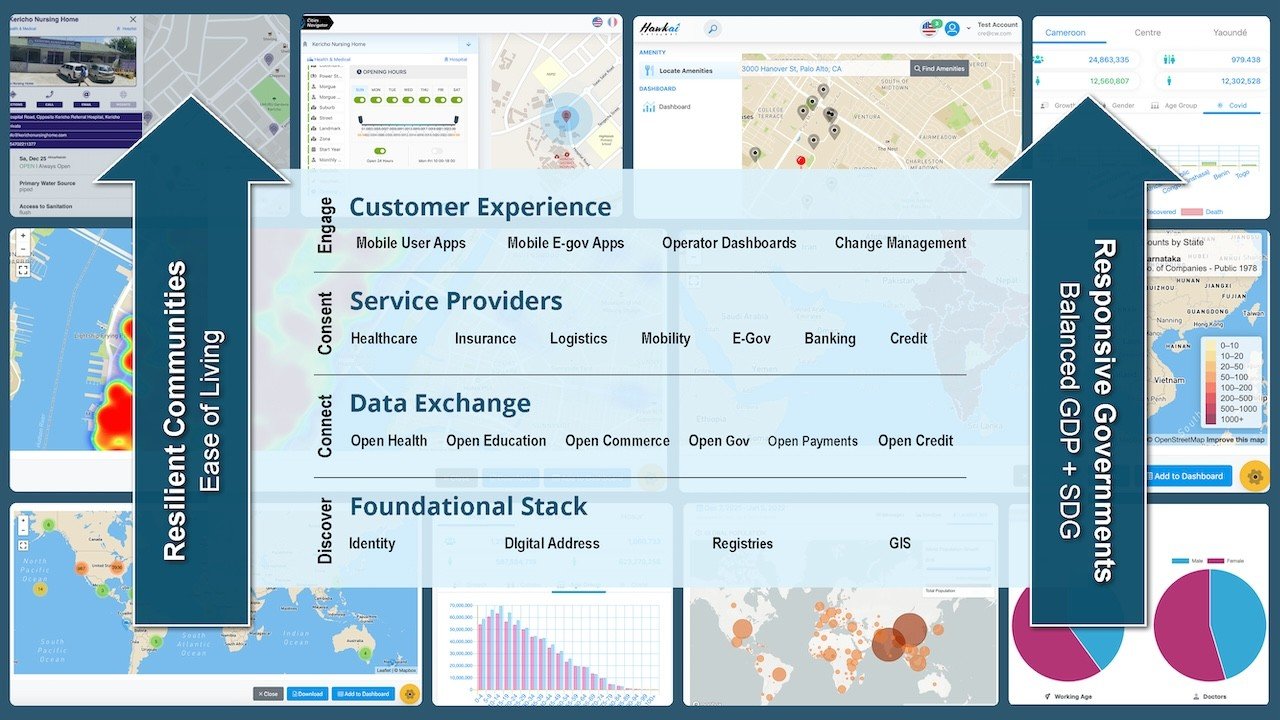

DPI Architecture

DPI is a layered architecture with a Foundational Stack that provides the building blocks and open APIs to authenticate, authorize, discover services, and locate assets. Aadhaar, the digital identity service for the #IndiaStack, brought down the cost of verifying identity from around $12 to 6 cents, which catalyzed financial inclusion and social equity, and enabled portability of services (Read more about the #IndiaStack at Is DPI the next Y2K?). Data registries allow authorizations and services to be discovered. Data registries aligned around UN Sustainable Development Goals (SDGs) will allow governments to better manage national and private assets and provide the agility to respond faster to any future pandemics, natural disasters, conflicts, and climate-change events. Open GIS data provides a single source of truth for the location of assets, and regional, district, and property boundaries.

Applications need data. Data registries provide that data. Open Health applications need health facility registries and health professional registries. Open Education applications need educational facilities and skills registries. Open Commerce applications need supplier and logistics provider registries. Open Gov applications will require birth/marriage/death registries, property registries, and city assets registries (Read more on how open data and open maps are key to effective, accountable, and inclusive institutions – Bangalore Floods – A Call for Open Data).

Registries and open federated data will also allow for nation-level datasets to be de-identified and made available for shared research. The availability of these large tokenized datasets will give data analytics, ML, and AI workloads the data volumes needed to help reduce bias, give researchers the ability to replicate results independently, and allow companies to accelerate development and create localized solutions. The most transformative changes happen when we have the right data.
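One way to picture de-identification is to replace the direct identifier in each record with a keyed token and drop the other identifying fields before sharing. The sketch below reads "tokenized" in that sense, which is an assumption, and the field names (`national_id`, `name`, `phone`) are hypothetical. It uses Python's standard `hmac` and `hashlib` modules.

```python
import hashlib
import hmac

def tokenize(identifier: str, secret_key: bytes) -> str:
    """Derive a stable token from an identifier using a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()

def de_identify(record: dict, secret_key: bytes,
                id_field: str = "national_id",
                drop_fields: tuple = ("name", "phone")) -> dict:
    """Return a copy of the record with direct identifiers removed or tokenized."""
    cleaned = {
        key: value for key, value in record.items()
        if key != id_field and key not in drop_fields
    }
    cleaned["token"] = tokenize(record[id_field], secret_key)
    return cleaned

# Hypothetical example record and key; a real key would be kept secret.
key = b"example-key-held-by-the-registry"
visit = {"national_id": "1234-5678", "name": "A. Patient",
         "phone": "+15550102000", "district": "North", "diagnosis": "influenza"}
shared = de_identify(visit, key)
```

Because the same identifier always maps to the same token, researchers can still link records for one individual across datasets without seeing who that individual is. Tokenizing identifiers is only a first step: combinations of remaining attributes, such as district and diagnosis, can still re-identify people in small populations.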

Above the Foundational Stack is the Data Exchange layer. The Data Exchange layer enables people to connect and transact with people, with businesses, and with the government. Data exchanges include open health (seamless sharing of health and payment data between providers, payers, and patients), open commerce (seamless sharing of data between suppliers, buyers, logistics providers, payment and credit providers, and reverse logistics providers), and open credit (seamless sharing of data between financial institutions). These data exchanges will make patient records portable, make credit more equitable and accessible, and disintermediate and unbundle order fulfillment for open commerce.

The Consent layer protects an individual’s right to privacy and gives them agency over their personal data. This layer allows individuals to control how their data is used and shared. It ensures that any personally identifiable information is shared only with user consent. It can also notify users when their data is being shared, allowing them to approve or reject the request.
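As a rough sketch of the idea, a consent check can sit between a data request and the data itself, releasing only the fields the individual has approved for that requester and recording each request so the user can be notified. The `ConsentRegistry` class and its methods below are hypothetical and do not represent the API of any actual DPI implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

@dataclass
class ConsentRegistry:
    # Maps (user_id, requester) to the set of fields the user has approved.
    grants: Dict[Tuple[str, str], Set[str]] = field(default_factory=dict)
    # Log of sharing events that could be surfaced to the user as notifications.
    notifications: List[Tuple[str, str, List[str]]] = field(default_factory=list)

    def grant(self, user_id: str, requester: str, fields: Set[str]) -> None:
        self.grants.setdefault((user_id, requester), set()).update(fields)

    def revoke(self, user_id: str, requester: str) -> None:
        self.grants.pop((user_id, requester), None)

    def share(self, user_id: str, requester: str,
              record: dict, requested: Set[str]) -> dict:
        """Release only the requested fields that the user has consented to."""
        allowed = self.grants.get((user_id, requester), set())
        released = {f: record[f] for f in requested if f in allowed and f in record}
        self.notifications.append((user_id, requester, sorted(released)))
        return released

registry = ConsentRegistry()
patient = {"name": "A. Patient", "blood_group": "O+", "address": "12 Lake Road"}

registry.grant("user-1", "city-hospital", {"name", "blood_group"})
first = registry.share("user-1", "city-hospital", patient, {"name", "address"})

registry.revoke("user-1", "city-hospital")
second = registry.share("user-1", "city-hospital", patient, {"name"})
```

Here the hospital receives the name but not the address, because the address was never approved, and receives nothing once consent is revoked. Defaulting to an empty set of allowed fields means data stays private unless the individual has explicitly opted in.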

The Customer Experience layer is the set of applications that an individual uses to engage with the services. DigiLocker is a mobile application that provides users with digital versions of documents such as driver’s licenses, birth certificates, and educational diplomas. DigiYatra is a mobile application that allows a paperless and document-free travel experience for air passengers. Applications built on DPI promise a faceless, paperless, cashless, and frictionless experience that reduces wait times and enhances customer experience. Change management tools ensure that data is current, and operator dashboards allow administration, bookkeeping, and analytics.

DPI will boost financial inclusion, provide equitable access to credit, patch leaks in welfare programs, significantly lower the cost of healthcare, make businesses more competitive and governments more agile, and accelerate the achievement of SDGs. When data is open and accessible, digital access is inclusive, and data barriers fall, communities become resilient and governments responsive.

Hawkai Data provides a Customer eXperience Platform (CXP) to quickly prototype, operationalize, and scale applications and services. Start your digital transformation today and create new business and customer experiences using Hawkai Data CXP.

If you have any questions, talk to us at info@hawkai.net, or follow us on LinkedIn at https://www.linkedin.com/company/hawkai-data/, or connect with us at https://hawkai.net.