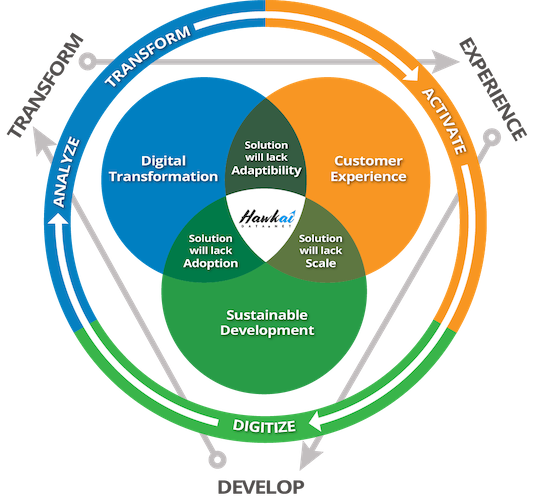

Over 70% of all digital transformation initiatives fail. Projects are considered a failure when stated objectives are not met. The stated objectives are usually to scale the business, improve process efficiency, increase productivity, cut costs, improve margins, or reduce risks.

Failure is a function of adoption. If too few users migrate to the digital services and applications, the objectives will not be met. Is there a way to mitigate such risks? Is there a way that we can guarantee the success of a digital transformation?

The lack of adoption could be a result of poor user experience. It could also be a result of the system’s inability to adapt to change. Customers are fickle, their preferences change, the target audience for the product itself may change, and even markets may change. Digital systems must have the agility to rapidly iterate new experiences without impacting adoption.

Digital platforms that can quickly respond and adapt to changes will provide sustainable value over time. For digital transformations to succeed, the underlying technology platform must have the agility to move fast and create new customer experiences as the market changes. It must continue to deliver value sustainably as the system scales. How do we ensure this? By understanding the impact and real costs of the technology choices we make.

Sustaining the Unsustainable

The dark web is a corner of the internet where users can interact and share information anonymously. Using the Tor browser and encrypted communication between multiple onion routers, a user was assured anonymity without worrying about browser histories, browser caches, network-provider or server-side logs. There was just one issue – transacting on the dark web would leave a money trail that could reveal your identity. Bitcoin was created to address this fundamental issue in the dark web – ensuring anonymity while transacting.

Bitcoin was based on a distributed ledger called Blockchain. The dark web is foundationally a decentralized network; every service is by design without any central authority, to ensure anonymity and avoid traceability. Bitcoin allowed marketplaces to flourish in the dark web and that is when the circus started.

Blockchain became the solution to everything, even in the clear web. Once a circus is in town, it is only a matter of time before the clowns show up. Futurists came out of the woodwork and predicted the end of banks and trusted intermediaries. Blockchain was the hammer and every problem a nail. Blockchain, the pundits said, was the technology for maintaining land registries, securing electronic voting machines, enforcing contracts, eliminating escrow agencies, and for some, the answer to even global hunger.

It turns out that blockchain was a solution in search of a problem in the clear web. What was not said was that blockchain had limits on the amount of data it could store, that each transaction was several hundred thousand times more expensive than a credit card transaction, and that it was extremely slow. You can add data to a blockchain, but you cannot edit or delete it. If something went wrong, there was no trusted entity to call to sort out the issue.

Trusting an entity and using a regular database, it turned out, was much cheaper and more sustainable than storing data on a blockchain. Several countries are experimenting with Central Bank Digital Currency (CBDC), a way of dematerializing bank notes with a digital token. CBDCs will significantly reduce complex maintenance requirements for financial institutions, cut down money transfer costs for people and institutions, and reduce costs for printing and distributing currency notes. Since the digital tokens are backed by a country’s central bank, it is significantly more economical to implement them using a regular database.

A single Bitcoin transaction today consumes over 700 kilowatt-hours. By comparison, a credit card transaction consumes 1.48 watt-hours. About 475,000 credit card transactions consume the same energy as a single Bitcoin transaction. Bitcoin consumes around 160 terawatt-hours of electricity annually, which is about half of what the U.K. consumes in a year. If Bitcoin were a country, it would be among the top 25 countries in terms of electricity consumption. While Bitcoin is the preferred solution for transacting in the dark web, it is clearly not sustainable for the clear web.
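The comparison above is simple arithmetic and can be checked in a few lines. The sketch below uses only the two per-transaction figures quoted in this article; the constant and function names are illustrative.

```python
# Energy per transaction, using the figures quoted in this article.
BITCOIN_TX_KWH = 700   # kilowatt-hours per Bitcoin transaction ("over 700")
CARD_TX_WH = 1.48      # watt-hours per credit card transaction


def card_transactions_per_bitcoin_tx(bitcoin_kwh: float, card_wh: float) -> float:
    """Return how many card transactions use the energy of one Bitcoin transaction."""
    bitcoin_wh = bitcoin_kwh * 1000  # convert kWh to Wh so the units match
    return bitcoin_wh / card_wh


ratio = card_transactions_per_bitcoin_tx(BITCOIN_TX_KWH, CARD_TX_WH)
print(f"{ratio:,.0f} card transactions per Bitcoin transaction")
```

With exactly 700 kWh the ratio comes to roughly 473,000. Since a Bitcoin transaction consumes somewhat more than 700 kWh, this is consistent with the rounded figure of about 475,000.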

In the clear web, a credit/debit card transaction is evidently a much more sustainable solution. In the physical world, however, a credit/debit card transaction requires powered POS terminals and a private secure network. Over 800 billion credit and debit card transactions happen every year. At 1.48 watt-hours each, this translates to an electricity consumption of about 1.18 terawatt-hours annually. The penetration of POS terminals is limited in large parts of the Global South. As marketplace transactions in these parts become more digital, the volume of credit/debit transactions will significantly add to the carbon footprint. We will need greener and more sustainable options for digital payments in the real world.
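The annual total follows from multiplying the transaction volume by the per-transaction energy. A minimal sketch, assuming the figures quoted in this article (800 billion transactions, 1.48 watt-hours each, 160 terawatt-hours a year for Bitcoin); the names are illustrative.

```python
# Annual energy for card payments, using the figures quoted in this article.
CARD_TX_PER_YEAR = 800e9      # "over 800 billion" card transactions per year
CARD_TX_WH = 1.48             # watt-hours per card transaction
BITCOIN_TWH_PER_YEAR = 160    # Bitcoin's annual consumption in terawatt-hours

WH_PER_TWH = 1e12             # one terawatt-hour is 10^12 watt-hours

card_twh_per_year = CARD_TX_PER_YEAR * CARD_TX_WH / WH_PER_TWH
bitcoin_to_card = BITCOIN_TWH_PER_YEAR / card_twh_per_year

print(f"Card payments: {card_twh_per_year:.2f} TWh per year")
print(f"Bitcoin uses roughly {bitcoin_to_card:.0f} times as much")
```

On these numbers, the entire global card network uses about 1.18 TWh a year, and Bitcoin uses on the order of 135 times that.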

India’s Unified Payments Interface (UPI) is a component of the India Digital Stack, a public digital infrastructure, that enables transactions without requiring powered terminals or a private secure network. Sellers display a QR code and buyers use their mobile phones to transact and transfer money. Half a billion people have been brought into the formal economy through UPI. For many parts of the Global South, UPI will create a more sustainable and inclusive digital economy, allow no-cash marketplaces to flourish, and move hundreds of millions into the formal economy.

A solution that works well in the dark web may not be the most sustainable for the clear web. Similarly, a solution that works well in the clear web may not be the most sustainable for the physical world.

Show a picture of a cat and a dog to a toddler and she will never mistake a cat for a dog. A machine will need at least 10,000 pictures of cats before it can identify a cat, and another 10,000 pictures of dogs before it can distinguish between a cat and a dog. The same toddler, when shown a tiger, will identify it as a big cat, and a wolf as a big dog. The machine would need another 10,000 pictures of tigers and 10,000 pictures of wolves, all properly tagged as ‘tiger, Family:felidae’ and ‘wolf, Family:canidae’, to classify them and recognize the relationships.

This tagging and annotation is what makes AI work. It is done by unseen, low-paid workers in remote parts of the world: repetitive, soulless work, done without context, meaning, or purpose. Millions of workers manually tag and annotate videos, images, and text, which are fed into a neural network that embeds them into an n-dimensional vector space, clustering similar objects together so that it can distinguish a cat from a dog. Training and tuning a model takes people, time, and money. In comparison, the toddler’s brain, shaped by an evolutionary process over millions of years, can identify and classify objects on less than 10 watts of brain power.
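The idea of clustering similar objects in a vector space can be illustrated with a toy example. The sketch below is not how a neural network is trained; it assumes the embeddings already exist, uses made-up 2-D vectors in place of learned high-dimensional ones, and classifies a new vector by its nearest class centroid. All names and values are hypothetical.

```python
import math

# Hypothetical 2-D "embeddings" for tagged images. A real model would
# learn vectors with hundreds or thousands of dimensions.
labeled_embeddings = {
    "cat": [(0.90, 0.10), (0.80, 0.20), (0.85, 0.15)],
    "dog": [(0.10, 0.90), (0.20, 0.80), (0.15, 0.85)],
}


def centroid(points):
    """Return the component-wise mean of a list of equal-length vectors."""
    count = len(points)
    dims = len(points[0])
    return tuple(sum(p[i] for p in points) / count for i in range(dims))


# One centroid per label summarizes where that cluster sits in the space.
centroids = {label: centroid(pts) for label, pts in labeled_embeddings.items()}


def classify(vector):
    """Return the label whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(vector, centroids[label]))


print(classify((0.7, 0.3)))  # lands nearer the "cat" cluster
```

The tagged examples do all the work here: without the human-supplied labels there would be no "cat" or "dog" cluster to compare against, which is the dependence on annotation described above.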

While the toddler’s brain is significantly more efficient at identifying and classifying objects, the AI model excels at finer classifications. The AI model once trained can easily distinguish a Siberian Husky from an Alaskan Malamute. For the toddler, both are dogs.

There are domains where AI models have excelled and outdone traditional programming. Stockfish is a chess engine that originally used brute-force computing and programmed heuristics to evaluate over 100 million positions per second. In 2020, the evaluation function of Stockfish was replaced by a neural network evaluation function. The brute force of traditional analysis using chess theory heuristics, combined with the neural network’s positional knowledge of millions of previously played games, pushed it to an Elo rating of 4200, almost a 1500 point advantage over today’s grandmasters. Stockfish can analyze far deeper and remember more games than any grandmaster, making it unbeatable by any human today.

Chess is a well understood game with well-defined rules, and with both players having complete information. This is not true for most real world problems, where you have to be able to work with incomplete information; data is rarely complete, correct, consistent, or current. Additionally, annotated datasets may inherently have bias. To make data usable it must first be cleansed, which is a laborious process. Despite that, every technology solution today is labeled as AI.

AI is the new hammer and every problem a nail. It is the same circus, but different clowns. The world has reached a tipping point where climatic extremes are the new normal. We did not get here because of bovine flatulence but because of our continued insistence on sustaining the unsustainable.

Imitation is not Intelligence

Descartes was the first to describe the duality of the body and the mind. The body was explainable as a mechanical device, an automaton whose working could be explained. The difference between a machine and the mind was the mind’s ability to reason and think. Cogito Ergo Sum (I think, therefore I am) – the ability to think was what distinguished the mind from other physical objects.

In 1950, Alan Turing, in a paper titled Computing Machinery and Intelligence, posed the question “Can machines think?” and proposed a thought experiment for testing it. He called it the Imitation Game – a human judge carries on a text-based conversation with an unseen computer and a hidden person, and attempts to distinguish the computer from the human based on their responses. According to Turing, if a machine could reliably mimic a person’s responses, it must follow that the machine was capable of thinking.

In 1990, Hugh Loebner created the Loebner Prize, offering a grand prize of $100,000 and a gold medal to the first chatbot that could pass an extended Turing test with text, image, and audio components. The contest was held annually from 1991, but no chatbot was ever awarded the grand prize. Hugh Loebner died in 2016, and in 2019 the Loebner Prize event, in its original format, was discontinued. The Large Language Models (LLMs) that power today’s chatbots, built on transformer-based neural networks, started processing sentences and paragraphs with words understood in context only after 2019.

ChatGPT today should easily pass the Turing test. With its deliberate pauses between typing text to give the illusion that it is thinking, occasional mistakes, and its self-deprecating humor, it is hard to tell its responses from a real person’s. In a few years, you could call a doctor’s office, do a video consultation with a doctor in your preferred language, and not even realize that the doctor does not exist. The doctor might be fake, but its answers fairly accurate. You may get your answers, but healing requires understanding and empathy, which cannot be faked by machines.

Consider a different scenario. You are driving down a road and you see a sinkhole open up in front of you. Millions of years of evolution that trained our species to survive will respond in a fraction of a second and make you slam the brakes. You don’t need any training for it. Evolution has trained humans for survival. A Tesla on autopilot will go straight into the hole. It has not been trained for it. It is an edge case too expensive to train for. Edge cases kill. A person wearing a raincoat wheeling their bike, a white semi truck against a white sky, and stopped emergency vehicles are all edge cases, and all have unfortunately caused deaths.

Neural networks guzzle energy with their insatiable appetite for computing. To improve accuracy, a model requires more and more data. A measure of the size of a model is the number of parameters it has. OpenAI’s GPT-2 had 1.5 billion parameters. GPT-3 has 175 billion parameters, and GPT-4, which handles text and images, is reported to have over a trillion parameters. Training a model may take weeks and consumes huge amounts of energy. Once trained, the model may be deployed in data centers, IoT edge devices, and on-board computers of autonomous vehicles. Billions of sensors sensing, billions of edge devices and on-board computers processing the data, and embedded instances of the models running inference are increasing global demand for power and will significantly add to carbon emissions.

Every day, more data is being added to the AI infrastructure. With more data, models with larger vector sizes and more parameters, with increased computational ability and fast vector search, will AI models be able to make the jump from knowledge to understanding? Can machines think? Is adding more data the key to discovering new patterns and relationships and eventually unlocking wisdom? The answer to that was provided by Lao Tzu, over 2,600 years ago – to attain knowledge, add things every day. To attain wisdom, remove things every day.

Wisdom is about doing more with less. For AI to get from knowledge to wisdom, these models need to be able to not just recognize patterns, but abstract and reduce them to first principles and derive fundamental truths. Simplify through a process of abstraction and computational reducibility – do the same while taking less power, less computing resources, and less memory. Biological systems through evolution have perfected it, simplifying models through a process of computational reducibility, doing more with less, by abstracting the necessary and forgetting the superfluous and unnecessary. Natural selection has favored neurological efficiency.

The calculator didn’t make everybody a math genius. It actually dumbed down the vast majority. Spellcheck and Grammarly didn’t make bad writers good. They again dumbed down the vast majority. Tesla autopilot is only making bad drivers worse. When humans collaborate closely with machines to supplement their abilities, the human brain does what it does best – forget skills that it deems redundant or superfluous. In our accelerating pursuit of artificial intelligence, the only casualty is human intelligence.

Adaptability, Scale, and Adoption

Digital transformations fail in the gap between user expectation and user experience. Failure is a result of the promise of digital transformation not matching the experience of the operationalized digital service. Can we create a process that addresses this gap and guarantee the success of a digital transformation?

We can guarantee success if we can activate the user experience early, ensure that the data model is flexible and adaptable to change, and if we understand the impact and costs of the technology platform that we choose. The right model, the right platform, the right experience, and the right process together will guarantee success.

The Hawkai Data platform enables customer experiences to be activated early in the development phase. This allows for feedback from the user experience to be integrated into the development cycle. Once the user experience is validated, the data model can be scaled, and services operationalized. Read more about the Hawkai Data Customer eXperience Platform (CXP) at Digital Transformation Accelerated.

The success of a digital transformation may not translate to business success. Business success is conditioned on a sustainable business model. Sustainability must be the way of doing business and not restricted to a business function. Digitally transforming an unsustainable business model is a waste of time and money.

Take health care. Billions of dollars are spent annually on digitally transforming health care to reduce cost of services and improve patient outcomes. Despite that, there is currently no country that is on track to meet the UN Sustainable Development Goals (SDG) 3 target for universal and affordable health care by 2030. Trust in the healthcare system is at an all-time low and patient outcomes are showing a downward trend. Throwing more technology, time, and money at an unsustainable healthcare model will not pay dividends unless we address root causes.

The only way to meet the SDG 3 target is to change the model for delivery of health care and create a sustainable model that works. This new model needs to disentangle health insurance from employment, change the retail nature of health insurance, and avoid fragmentation of health data. Read our three-part series on how the right healthcare model and digital public goods can make health care universal, affordable, and sustainable.

Part 1 — The Rash that Cost $1538

Part 2 — Transforming Healthcare —Better Outcomes at Lower Costs.

Part 3 — The Missing Middle —Towards Universal and Affordable Health Coverage

In a recent survey, 80% of enterprise CEOs polled said they plan to deploy AI in the next few years. In a similar poll last decade, 80% of enterprise CEOs were planning to deploy Blockchain solutions.

If you are in the 20% of enterprises that want real solutions, which will generate sustainable growth and deliver continued value over time, talk to us at info@hawkai.net, follow us on LinkedIn at https://www.linkedin.com/company/hawkai-data/, or connect with us at https://hawkai.net.

* Header image uses photo by Alexander Krivitskiy | Unsplash